LangBot provides a set of RESTful APIs that let external services integrate with it and automate management. These Service APIs are a subset of the HTTP API and are authenticated with API keys.

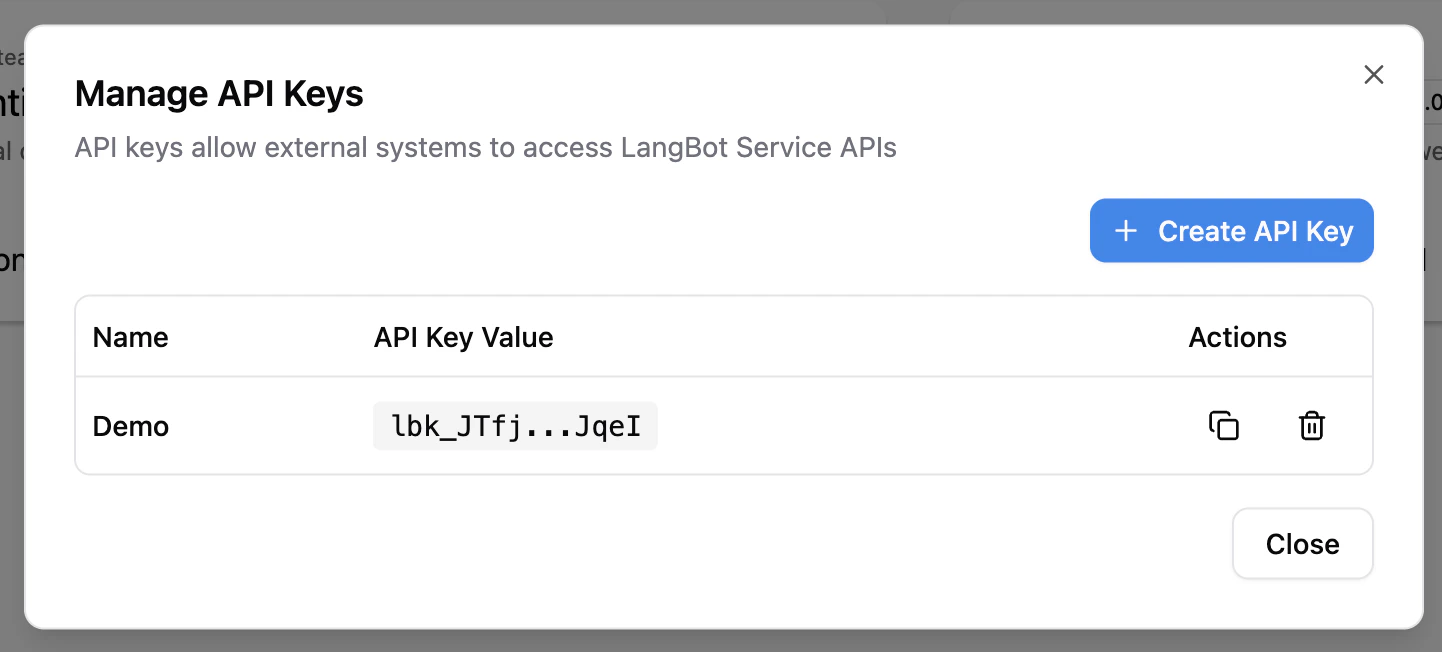

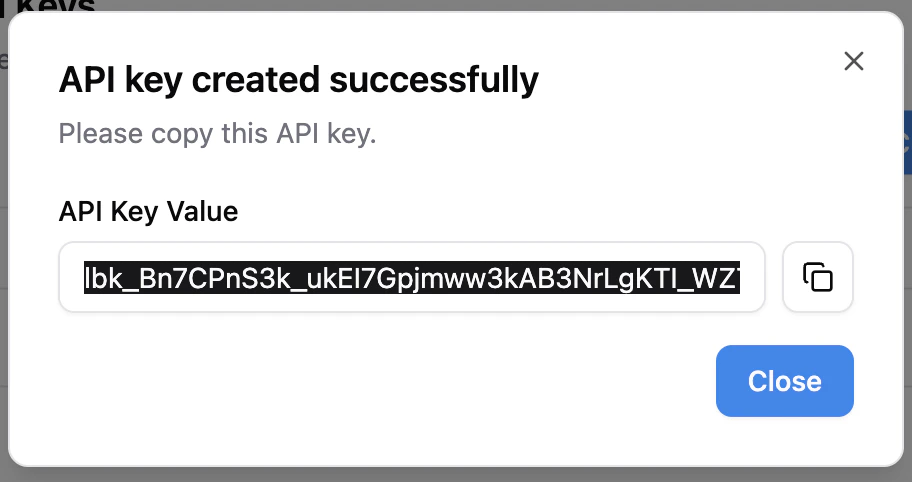

Get API Key

Click the API Keys button in the sidebar of the WebUI to create an API Key.

Authentication

All Service APIs support two authentication methods:

- API Key: via the `X-API-Key: <your-key>` or `Authorization: Bearer <your-key>` header
- User Token: via the `Authorization: Bearer <user-token>` header (obtained after login)
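The two authentication methods above can be sketched as a small helper that builds the request headers. The function name `build_auth_headers` and its parameters are hypothetical and are not part of LangBot; only the header names and formats come from the list above.

```python
def build_auth_headers(api_key=None, user_token=None, use_bearer=False):
    """Return HTTP headers for one of the documented authentication methods.

    Hypothetical helper: exactly one of api_key or user_token must be given.
    """
    if (api_key is None) == (user_token is None):
        raise ValueError("provide exactly one of api_key or user_token")
    if user_token is not None:
        # User Token: always sent as a Bearer token.
        return {"Authorization": f"Bearer {user_token}"}
    if use_bearer:
        # API Key, alternative form: sent as a Bearer token.
        return {"Authorization": f"Bearer {api_key}"}
    # API Key, default form: sent in the X-API-Key header.
    return {"X-API-Key": api_key}
```

Calling `build_auth_headers(api_key="<your-key>")` yields the `X-API-Key` form; passing `use_bearer=True` switches to the `Authorization: Bearer` form for the same key.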

Use API

The left sidebar lists all available APIs. Fill in your instance address (the address and port exposed by the backend; the default is `http://localhost:5300`) and API Key, then click the Try it out button to call the API.

Core Concepts

Provider + Model Architecture

LangBot uses a Provider + Model two-layer architecture for AI model management:

- Provider: Defines the API endpoint (`base_url`), requester type, and API keys. A single provider can be associated with multiple models.
- Model: Associated with a provider (via `provider_uuid`) and defines the specific model name and parameters. Models inherit the provider's API endpoint and keys.
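As a rough illustration of the two-layer relationship, the sketch below models it with plain dataclasses. Only `base_url` and `provider_uuid` are names taken from the text above; every other field name, the `resolve_endpoint` helper, and the example values are assumptions made for illustration and do not reflect LangBot's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Provider:
    uuid: str                      # assumed identifier field
    base_url: str                  # API endpoint (name from the docs)
    requester: str                 # requester type, e.g. "openai-chat-completions"
    api_keys: list = field(default_factory=list)


@dataclass
class Model:
    name: str                      # specific model name
    provider_uuid: str             # link to the owning provider (name from the docs)
    params: dict = field(default_factory=dict)


def resolve_endpoint(model, providers):
    """Look up the endpoint and keys a model inherits from its provider."""
    provider = providers[model.provider_uuid]
    return provider.base_url, provider.api_keys


# One provider shared by two models (all values are placeholders).
providers = {
    "p-1": Provider(
        uuid="p-1",
        base_url="https://api.example.com/v1",
        requester="openai-chat-completions",
        api_keys=["sk-example"],
    )
}
small = Model(name="example-chat-small", provider_uuid="p-1")
large = Model(name="example-chat-large", provider_uuid="p-1", params={"temperature": 0.7})
```

Both `small` and `large` resolve to the same endpoint and keys, which is the point of the design: credentials and endpoints are configured once per provider rather than once per model.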

Requester

A requester defines the communication protocol with AI services. Use `GET /api/v1/provider/requesters` to list available requesters. Common requesters include:

- `openai-chat-completions`: OpenAI-compatible chat completions API (also works with SiliconFlow, DeepSeek, etc.)
- `anthropic-messages`: Anthropic Claude API
- `openai-embeddings`: OpenAI-compatible embeddings API
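A minimal sketch of calling the requester listing endpoint with Python's standard library is shown below. It assumes the default instance address `http://localhost:5300` and API Key authentication via `X-API-Key`; the function names are hypothetical, and because the response schema is not described here, the parsed JSON is returned as-is.

```python
import json
import urllib.request

BASE_URL = "http://localhost:5300"  # default backend address; adjust for your instance


def build_requesters_request(api_key, base_url=BASE_URL):
    """Build (but do not send) a GET request for the requester list."""
    url = base_url.rstrip("/") + "/api/v1/provider/requesters"
    return urllib.request.Request(url, headers={"X-API-Key": api_key}, method="GET")


def list_requesters(api_key, base_url=BASE_URL):
    """Send the request and return the decoded JSON body unchanged."""
    req = build_requesters_request(api_key, base_url)
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Splitting request construction from sending keeps the URL and header logic easy to inspect without a running LangBot instance; `list_requesters("<your-key>")` performs the actual call.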

Async Tasks

Some operations (plugin install, delete, and upgrade) run asynchronously and return a `task_id`. You can track progress in the WebUI.